Identifying male or female based on voice using XGBoost and ML

Abstract

Natural language processing has recently become ubiquitous through voice assistants such as Alexa, Siri and Cortana, all of which serve the same purpose: recognising speech and assisting people in all kinds of ways. Although the front end looks simple and makes it easy to get answers, the back end, which governs how the data given by users is pre-processed and returned to them, is complex. Similarly, identifying the gender of a person from audio files can be tricky, but it is possible with a proper dataset. The input to the model is a CSV file of features extracted from WAV files. The input data consists of the frequency of the audio, the modulation index and other important properties of a person's voice. Three models are trained and compared to see which performs best: K-Nearest Neighbours, an Artificial Neural Network and XGBoost.

Code Description & Execution

Algorithm Description

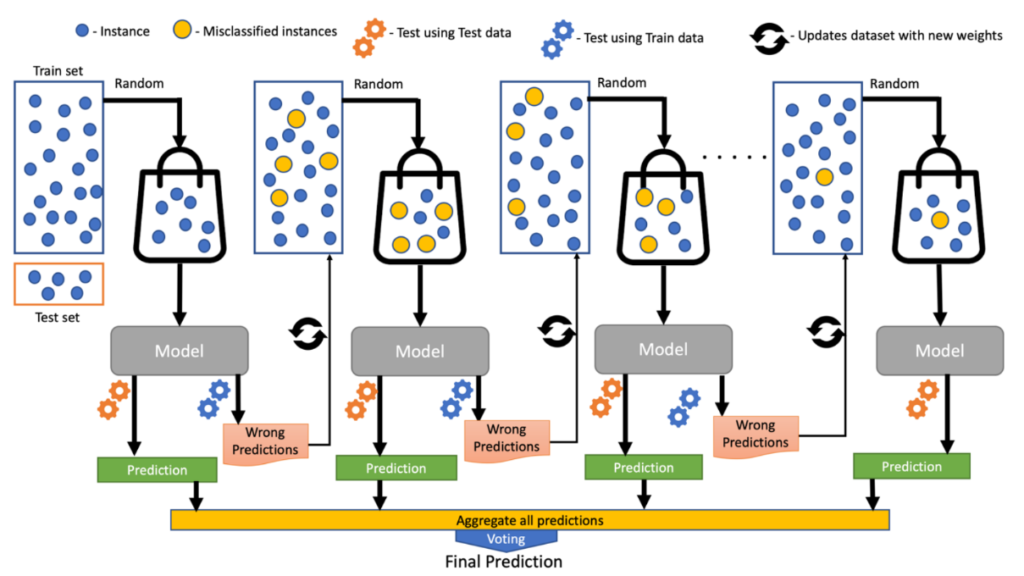

Extreme Gradient Boosting Classifier:

Extreme Gradient Boosting (XGBoost) is a boosting algorithm, introduced as a library written in C++, that optimizes the existing gradient boosting algorithm. Decision trees are built sequentially: each new tree is trained to correct the errors made by the trees before it, so the data points that were predicted wrongly have the most influence on the next tree. The individual classifiers/predictors are then combined into an ensemble that gives a more precise result. The algorithm works for both regression and classification.
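To make the "sequential trees" idea concrete, here is a minimal sketch of plain gradient boosting with one-split trees (stumps) on a single feature. It is not XGBoost itself, which adds regularisation, second-order gradients and many optimisations; the function names, the toy feature values and the 0/1 label encoding are all assumptions made for illustration.

```python
def fit_stump(xs, residuals):
    """Find the one-threshold split on xs that best fits the residuals (least squares)."""
    best = None
    for threshold in sorted(set(xs)):
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        if not left or not right:
            continue  # a split must put at least one point on each side
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        error = (sum((r - left_mean) ** 2 for r in left)
                 + sum((r - right_mean) ** 2 for r in right))
        if best is None or error < best[0]:
            best = (error, threshold, left_mean, right_mean)
    return best[1], best[2], best[3]


def stump_predict(stump, x):
    threshold, left_value, right_value = stump
    return left_value if x <= threshold else right_value


def fit_boosted_stumps(xs, ys, n_rounds=20, learning_rate=0.5):
    """Each round fits a stump to the errors (residuals) left by the previous rounds."""
    base = sum(ys) / len(ys)          # start from the mean label
    predictions = [base] * len(xs)
    stumps = []
    for _ in range(n_rounds):
        residuals = [y - p for y, p in zip(ys, predictions)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        predictions = [p + learning_rate * stump_predict(stump, x)
                       for p, x in zip(predictions, xs)]
    return base, stumps, learning_rate


def boosted_predict(model, x):
    base, stumps, learning_rate = model
    score = base + sum(learning_rate * stump_predict(s, x) for s in stumps)
    return 1 if score >= 0.5 else 0


# Made-up values of one frequency feature (kHz); 0 and 1 are hypothetical class codes
xs = [0.09, 0.10, 0.11, 0.12, 0.17, 0.18, 0.19, 0.20]
ys = [0, 0, 0, 0, 1, 1, 1, 1]
model = fit_boosted_stumps(xs, ys)
print(boosted_predict(model, 0.10), boosted_predict(model, 0.19))
```

With squared error as the loss, the residual is exactly the negative gradient, which is why fitting each new stump to the residuals counts as gradient boosting.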

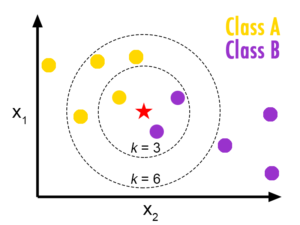

K-Nearest Neighbours:

KNN, or K-Nearest Neighbours, is a basic yet effective algorithm used in many machine learning applications. It is non-parametric, i.e. it makes no underlying assumption about the data, such as requiring a specific distribution, which makes it easy to understand and use. To predict the class of a new data point, KNN finds the nearest neighbours of that point and takes a majority vote: whichever class is most common among those neighbours is assigned to the new point.
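The nearest-neighbour vote described above can be sketched in a few lines of plain Python. This is an illustration only, assuming Euclidean distance and k = 3; the function name `knn_predict` and the two-feature points are made up and are not taken from the project code.

```python
import math
from collections import Counter


def knn_predict(train_points, train_labels, query, k=3):
    """Classify `query` by majority vote among its k nearest training points."""
    # Euclidean distance from the query to every training point
    distances = [
        (math.dist(point, query), label)
        for point, label in zip(train_points, train_labels)
    ]
    # Keep the k closest neighbours
    nearest = sorted(distances, key=lambda pair: pair[0])[:k]
    # Majority vote over the neighbours' labels
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Made-up two-feature points (values in kHz), for illustration only
train_points = [(0.10, 0.11), (0.11, 0.12), (0.12, 0.10),
                (0.17, 0.04), (0.18, 0.05), (0.16, 0.03)]
train_labels = ["male", "male", "male", "female", "female", "female"]

print(knn_predict(train_points, train_labels, (0.165, 0.045), k=3))
```

Because the prediction depends directly on distances, features on very different scales are usually scaled before KNN is applied.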

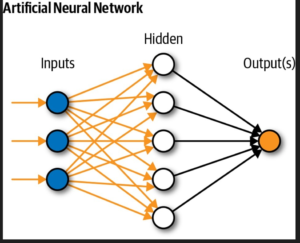

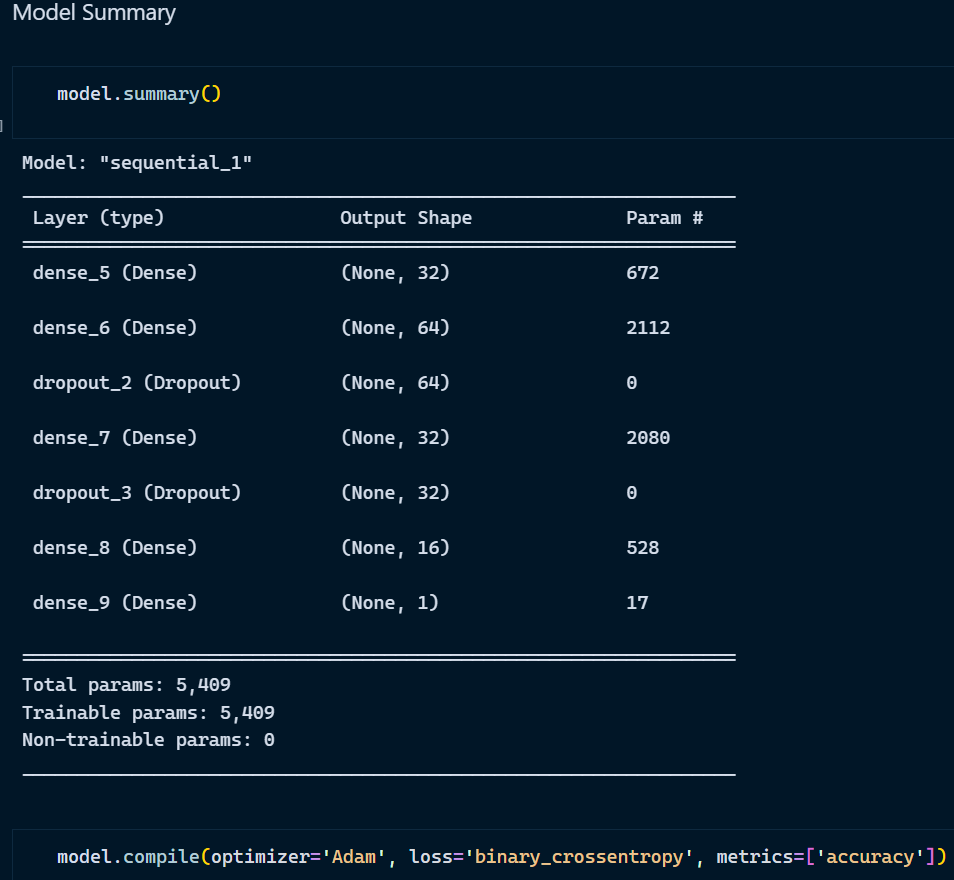

Artificial Neural Network:

An ANN is a neural network loosely modelled on the human brain. Just as a brain learns by observation or experience, an ANN learns by going through the dataset multiple times and picking up the relationships, hidden features and parameters in it. ANNs are useful for regression and classification tasks and can achieve high accuracy on large datasets.

Input Layer:

Whatever input you pass to the model for learning enters the neural network through this layer, which hands it on for calculation.

Hidden Layer:

As the name suggests, this layer is hidden: in a real-world application we only look at the input and the output, not at how things happen in between. The hidden layer performs the calculations, extracts the hidden features and has its weights updated during training to get the best possible accuracy.

Output Layer:

After the input has been processed by the hidden layer, the output layer returns the result.
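The three layers can be pictured as a single forward pass. The sketch below assumes one hidden layer with two neurons, sigmoid activations and hand-picked weights; in a real network the weights are learned during training, and none of these names or numbers come from the project code.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def dense(inputs, weights, biases):
    """One fully connected layer: a weighted sum per neuron, then a sigmoid."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


def forward(features, params):
    # Input layer: the raw features enter the network unchanged
    hidden = dense(features, params["hidden_w"], params["hidden_b"])  # hidden layer
    output = dense(hidden, params["output_w"], params["output_b"])    # output layer
    return output[0]  # a single value between 0 and 1


# Hypothetical weights: 3 input features -> 2 hidden neurons -> 1 output neuron
params = {
    "hidden_w": [[0.5, -0.2, 0.1], [-0.3, 0.8, 0.4]],
    "hidden_b": [0.0, 0.1],
    "output_w": [[1.2, -0.7]],
    "output_b": [0.05],
}

probability = forward([0.18, 0.06, 0.14], params)
print(round(probability, 3))
```

Training consists of repeating this forward pass over the dataset and adjusting the weights to reduce the loss.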

For detailed explanation of ANN, refer to this blog https://ai.plainenglish.io/artificial-neural-network-ann-edec89ce4daa

How to Execute?

Make sure you have ticked the "add to PATH" check boxes while installing Python and Anaconda.

Refer to this link, if you are just starting and want to know how to install anaconda.

If you already have Anaconda and want to check how to create an Anaconda environment, refer to the article on setting up Jupyter Notebook. You can skip the article if you already know how to install Anaconda, set up an environment and install from requirements.txt.

- Install the prerequisites/software’s required to execute the code from reading the above blog which is provided in the link above.

- Press windows key and type in anaconda prompt a terminal opens up.

- Before executing the code, we need to create a specific environment which allows us to install the required libraries necessary for our project.

- Type conda create -name “env_name”, e.g.: conda create -name project_1

- Type conda activate “env_name, e.g.: conda activate project_1

- Go to the directory where your requirement.txt file is present.

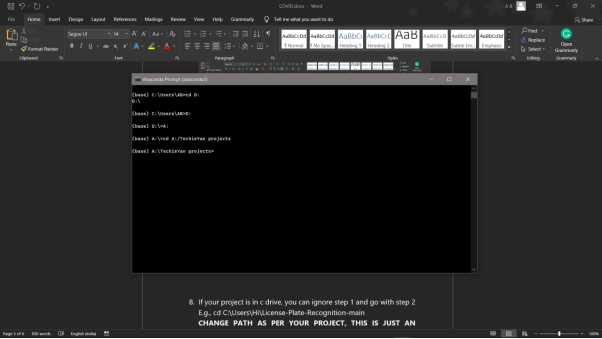

- cd <>. E.g., If my file is in d drive, then

- d:

7.cd d:\License-Plate-Recognition–main #CHANGE PATH AS PER YOUR PROJECT, THIS IS JUST AN EXAMPLE

8. If your project is in c drive, you can ignore step 5 and go with step 6

9. g., cd C:\Users\Hi\License-Plate-Recognition-main

10. CHANGE PATH AS PER YOUR PROJECT, THIS IS JUST AN EXAMPLE

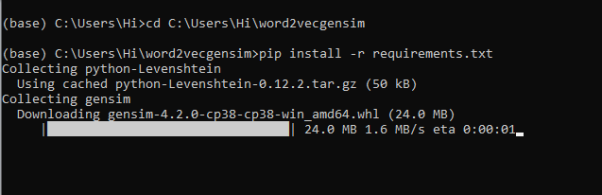

11. Run pip install -r requirements.txt or conda install requirements.txt (Requirements.txt is a text file consisting of all the necessary libraries required for executing this python file. If it gives any error while installing libraries, you might need to install them individually.)

12. To run .py file make sure you are in the anaconda terminal with the anaconda path being set as your executable file/folder is being saved. Then type python main.pyin the terminal, before running open the main.py and make sure to change the path of the dataset.

13. If you would like to run .ipynb file, Please follow the link to setup and open jupyter notebook, You will be redirected to the local server there you can select which ever .ipynb file you’d like to run and click on it and execute each cell one by one by pressing shift+enter.

Please follow the above links on how to install and set up anaconda environment to execute files.

Data Description

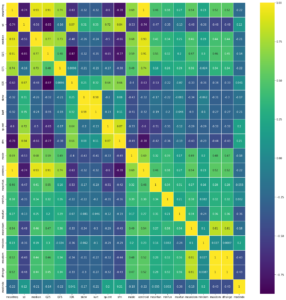

The dataset is collected from the Kaggle repository and contains 3168 instances with 21 features. Some of the features most relevant to the model we are trying to build are:

* meanfreq: mean frequency of the voice audio of the person (in kHz)

* sd: standard deviation of the frequency of the voice audio

* median: median frequency of the voice audio (in kHz)

* Q25: first quartile (in kHz)

* Q75: third quartile (in kHz)

* IQR: interquartile range (in kHz)

* skew: skewness, i.e. the degree of asymmetry of the distribution relative to a symmetrical bell curve (normal distribution)

* sp.ent: spectral entropy

* mode: mode frequency

* centroid: frequency centroid (see specprop)

* meanfun: mean fundamental frequency measured across acoustic signal

* minfun: minimum fundamental frequency measured across acoustic signal

* maxfun: maximum fundamental frequency measured across acoustic signal

* mindom: minimum of dominant frequency measured across acoustic signal

* maxdom: maximum of dominant frequency measured across acoustic signal

This study’s goal is to predict whether the voice given as an input is of male or female.
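As a rough sketch of how such a CSV can be split into feature columns and the male/female target, the snippet below parses a two-row excerpt with Python's built-in `csv` module. The rows are made-up values, and the target column name `label` is an assumption; check the header of your own file.

```python
import csv
import io

# A made-up two-row excerpt in the dataset's column layout (values are illustrative only)
sample_csv = """meanfreq,sd,median,Q25,Q75,IQR,label
0.18,0.06,0.19,0.14,0.22,0.08,male
0.20,0.04,0.21,0.18,0.23,0.05,female
"""


def load_rows(text, target_column="label"):
    """Split each CSV row into a dict of numeric features and a class label."""
    features, labels = [], []
    for row in csv.DictReader(io.StringIO(text)):
        labels.append(row.pop(target_column))  # the class we want to predict
        features.append({name: float(value) for name, value in row.items()})
    return features, labels


features, labels = load_rows(sample_csv)
print(labels)
print(sorted(features[0]))
```

In the excerpt, IQR is simply Q75 minus Q25, which is a quick sanity check to run on any row.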

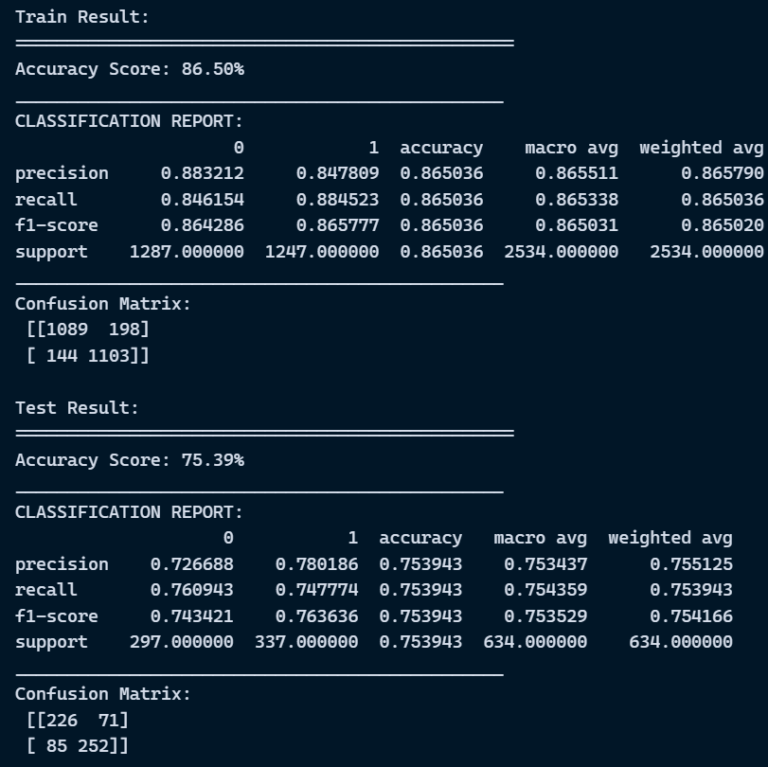

Final Results

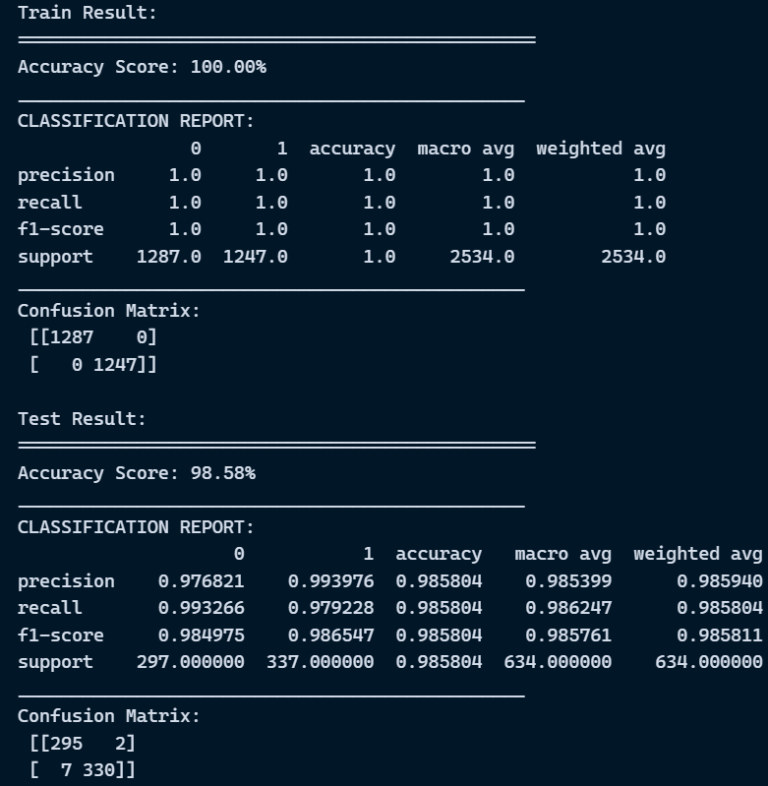

1. XGBoost Classifier

2. K-Nearest Neighbours

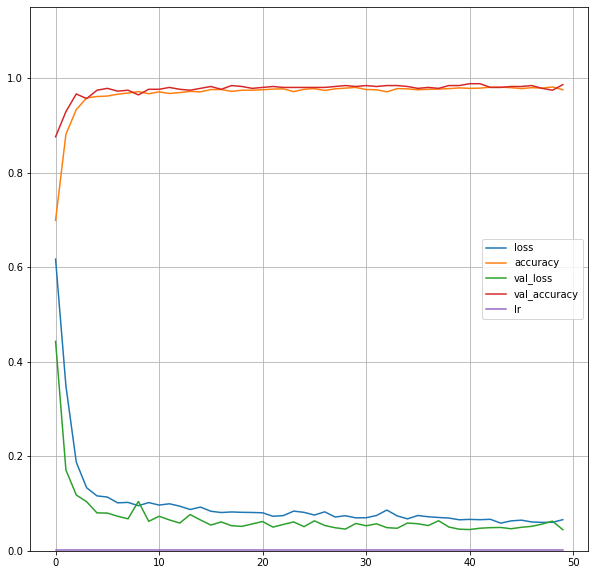

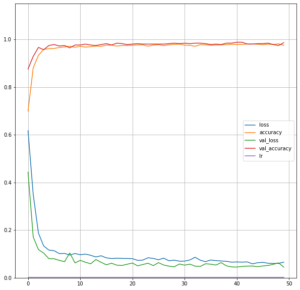

3. Artificial Neural Network

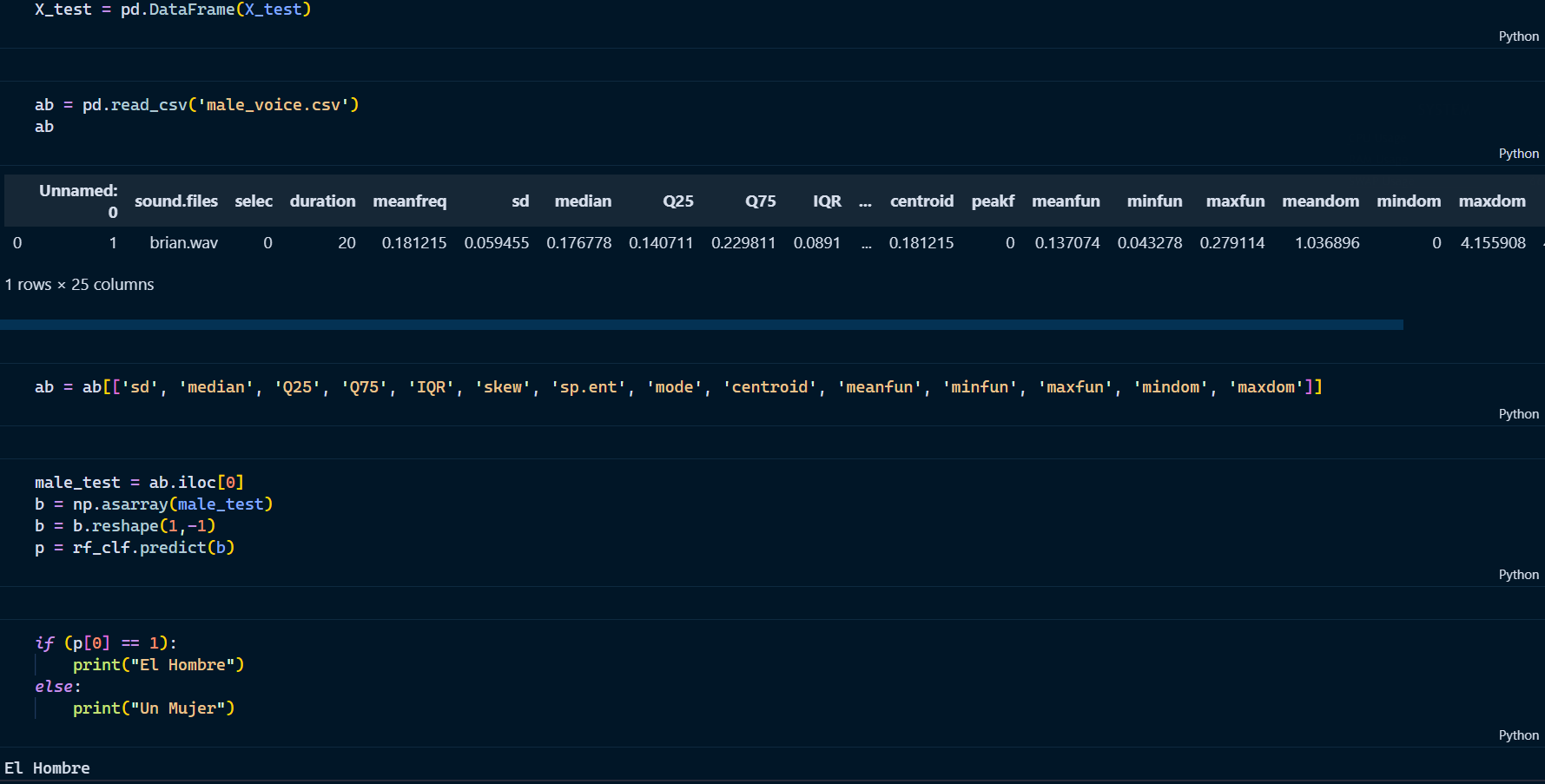

Testing on new data

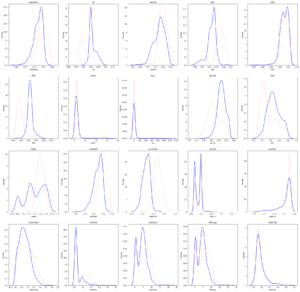

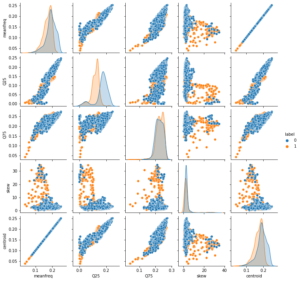

Exploratory Data Analysis

1. Correlation heatmap

2. Kernel Density Plot

3. Pair Plot

4. Evaluation plot

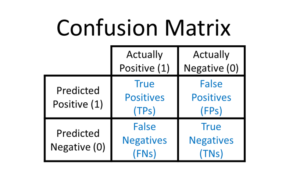

Evaluation Metrics

Evaluation is one of the most important steps in any machine learning or deep learning project, because it tells us how well the model performs on new, unseen data. Many evaluation metrics are available, such as ROC-AUC, F1 score, recall and precision, and each suits a specific kind of problem. For this project we use the confusion matrix and the classification report, which let us evaluate not just the accuracy of the model but also precision, recall and F1 score.
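To show what these metrics measure, here is a small hand-rolled sketch that counts the four confusion-matrix cells for a binary problem and derives accuracy, precision, recall and F1 score from them. The label lists are made up, and treating class 1 as the positive class is an assumption for illustration.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (tp, fp, fn, tn) for a binary classification problem."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    return tp, fp, fn, tn


def precision_recall_f1(tp, fp, fn):
    precision = tp / (tp + fp) if tp + fp else 0.0  # how many predicted positives are right
    recall = tp / (tp + fn) if tp + fn else 0.0     # how many actual positives are found
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return precision, recall, f1


# Made-up actual and predicted classes
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]

tp, fp, fn, tn = confusion_counts(y_true, y_pred)
accuracy = (tp + tn) / len(y_true)
precision, recall, f1 = precision_recall_f1(tp, fp, fn)
print(tp, fp, fn, tn)
print(accuracy, precision, recall, f1)
```

A classification report is essentially these per-class numbers laid out as a table.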

Confusion matrix:

Binary cross entropy/logloss:

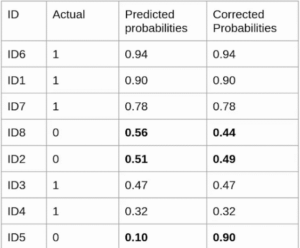

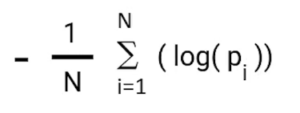

Binary cross-entropy, or log loss, compares the actual class with the predicted probabilities. It first computes a corrected probability for each data point, i.e. the probability the model assigned to the point's actual class. For a point that actually belongs to class 1, this is simply the predicted probability of class 1; for a point that actually belongs to class 0, it is 1 minus that probability. For example, ID8 actually belongs to class 0 but its predicted probability of class 1 is 0.56, so the corrected probability is 1 - 0.56 = 0.44. The log of each corrected probability is then taken, and the negative of the average of these logs gives the log loss (binary cross-entropy). The lower the value, the better the model is performing.
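The calculation can be sketched in plain Python as follows. Only the 0.56 value (the ID8 case) comes from the text above; the other probabilities and all function names are made up for illustration.

```python
import math


def corrected_probabilities(y_true, p_class1):
    """Probability the model assigned to each point's actual class."""
    return [p if y == 1 else 1.0 - p for y, p in zip(y_true, p_class1)]


def log_loss_from_corrected(corrected):
    """Negative average of the logs of the corrected probabilities."""
    return -sum(math.log(p) for p in corrected) / len(corrected)


def log_loss_direct(y_true, p_class1):
    """The same quantity computed without the intermediate corrected probabilities."""
    total = sum(
        y * math.log(p) + (1 - y) * math.log(1.0 - p)
        for y, p in zip(y_true, p_class1)
    )
    return -total / len(y_true)


# Actual classes and predicted probabilities of class 1.
# The second entry mirrors ID8: actual class 0, predicted probability 0.56.
y_true = [1, 0, 1, 0]
p_class1 = [0.90, 0.56, 0.70, 0.20]

corrected = corrected_probabilities(y_true, p_class1)
print(corrected)
print(log_loss_from_corrected(corrected))
print(log_loss_direct(y_true, p_class1))
```

Both functions return the same value, since for each point only one of the two terms in the direct form is non-zero.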

Log_loss calculation for corrected_probabilities

Log_Loss formula without calculating corrected_probabilities
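For reference, the two forms named above are usually written as below. The symbols are our own notation, not the article's: N is the number of data points, y_i the actual class (0 or 1), p_i the predicted probability of class 1, and p̃_i the corrected probability.

```latex
% Log loss from the corrected probabilities
\text{LogLoss} = -\frac{1}{N} \sum_{i=1}^{N} \log\left(\tilde{p}_i\right),
\qquad
\tilde{p}_i =
\begin{cases}
p_i, & y_i = 1 \\
1 - p_i, & y_i = 0
\end{cases}

% Log loss without calculating the corrected probabilities
\text{LogLoss} = -\frac{1}{N} \sum_{i=1}^{N}
\left[ y_i \log\left(p_i\right) + (1 - y_i) \log\left(1 - p_i\right) \right]
```

The two expressions are equal, because for every data point one of the two terms inside the bracket is zero.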



Issues you may face while executing the code

- You might face an issue while installing specific libraries; in that case, install them manually. Example: pip install "module_name/library", e.g. pip install pandas

- Make sure you have the latest version of Python, or the specific version the project requires, since a version mismatch can cause errors.

- Add Python and Anaconda to your PATH environment variable so that Python files and the Anaconda environment can be run from any code editor.

- Make sure to change the paths in the code to where your dataset/model is saved.

- Test data must be in the same format as the training data: the model is trained on a specific set of columns, so the same columns must be passed when testing.
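For the last point, a quick check like the one below can catch column mismatches before the test file reaches the model. It is only a sketch: `EXPECTED_COLUMNS` is a hypothetical subset, and the real list should be taken from the columns used when the model was trained.

```python
import csv
import io

# Hypothetical subset of training columns; replace with the columns your model was trained on
EXPECTED_COLUMNS = ["meanfreq", "sd", "median", "Q25", "Q75", "IQR"]


def check_columns(csv_text, expected):
    """Compare a CSV header with the expected columns.

    Returns (missing, extra, same_order).
    """
    header = next(csv.reader(io.StringIO(csv_text)))
    missing = [name for name in expected if name not in header]
    extra = [name for name in header if name not in expected]
    same_order = header == list(expected)
    return missing, extra, same_order


# Made-up test file that lacks the IQR column
test_csv = "meanfreq,sd,median,Q25,Q75\n0.18,0.06,0.19,0.14,0.22\n"
missing, extra, same_order = check_columns(test_csv, EXPECTED_COLUMNS)
print(missing, extra, same_order)
```

Column order is checked too, because a model fed a plain array of values has no way to notice that two columns were swapped.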

Refer to the links below for more details on installing Python and Anaconda and how to configure them.

https://techieyantechnologies.com/2022/07/how-to-install-anaconda/